Humans are naturally equipped with fantastic visual and tactile capabilities, outstanding manipulative dexterity, and an ability to learn new tasks rapidly in a way that is still beyond our understanding. What we do know is that humans carry out advanced manipulation tasks using multi-modal sensory perception, combining their visual and tactile senses, which are coordinated in the posterior parietal cortex of the brain. They learn new and complex manipulation tasks by combining their senses with previous experience, and by learning from demonstration and self-exploration.

State-of-the-art robots lack most of the outstanding manipulation, visual, tactile and learning skills of humans. Most research in robotics, and the resulting technologies, has focused on the manipulation of rigid rather than compliant objects. The manipulation of 3D compliant objects is one of the greatest challenges facing robotics today, both because of the deep complexity involved in perceiving the shape deformation and compliance of objects in real time, and because robots lack the ability to learn new and complex tasks. These challenges become even more acute if the objects are slippery, made of soft tissue, or have irregular shapes.

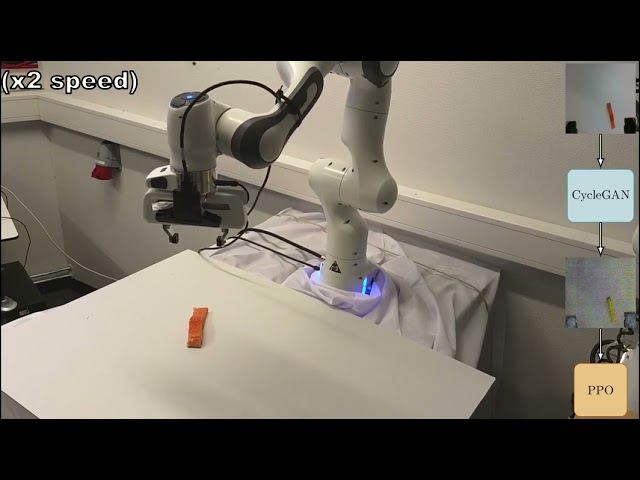

The GentleMAN project addresses these challenges by developing technology that equips robots with advanced manipulation skills that reproduce human-like movements and fine motor skills. Our research focuses on the development of new technologies for the robotic manipulation of 3D compliant objects based on advanced vision, touch and learning capabilities aided by artificial intelligence. The project will deliver new knowledge and technologies enabling robots to become commonplace in a variety of future industrial settings, carrying out advanced manipulation tasks currently performed only by humans. The focus is on compliant objects of marine origin.

Financed by: IKTPLUSS, Research Council of Norway.